Why would we want subjective AI?

It may be both safer and more efficient.

Think about how you learn.

Do you memorize everything there is to know and then regurgitate it, or do you teach yourself how to think about and react to new experiences?

Think about playing sports. Does an athlete remember that when a ball arrives at 20 degrees they need to move 45 degrees and then apply 500 newtons of force? Or do they remember “when I make these sorts of adjustments, which feel this particular way, I experience the following outcomes”?

Why do living beings have subjectivity at all?

It would be overwhelming to have to memorize everything there is to know simply to function in the world. We aren’t born with knowledge. Ground truth is not just lying around out there in the universe like a training dataset. Any new context is a potential catastrophic collapse for a system that depends on purely objective learning.

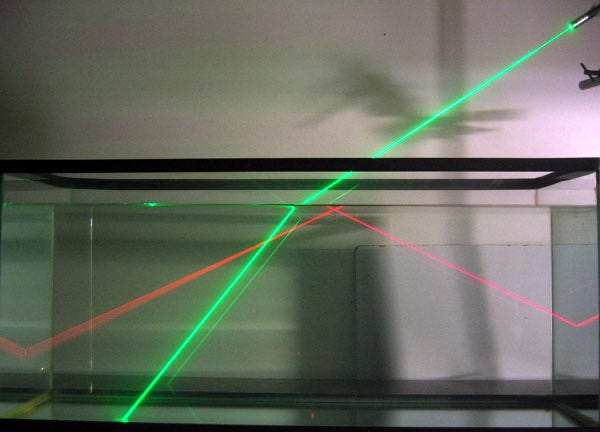

Subjective learning is not about memorizing information but about collecting experiences (qualia) and making contextual adjustments. A subjective system does not need to remember everything it perceives, which would be an impossible amount of information. It only needs to remember how it was affected and how to adjust to minimize disruption in the future.

A subjective system optimizes for coherence and can operate even in conditions without a frame of reference. The “default” behavior is simply the system’s stable identity function without adjustments, and adjustments are differentials applied to its internal dynamics. Mathematically, this is what Minary does: it maintains response differentials across related experiences.

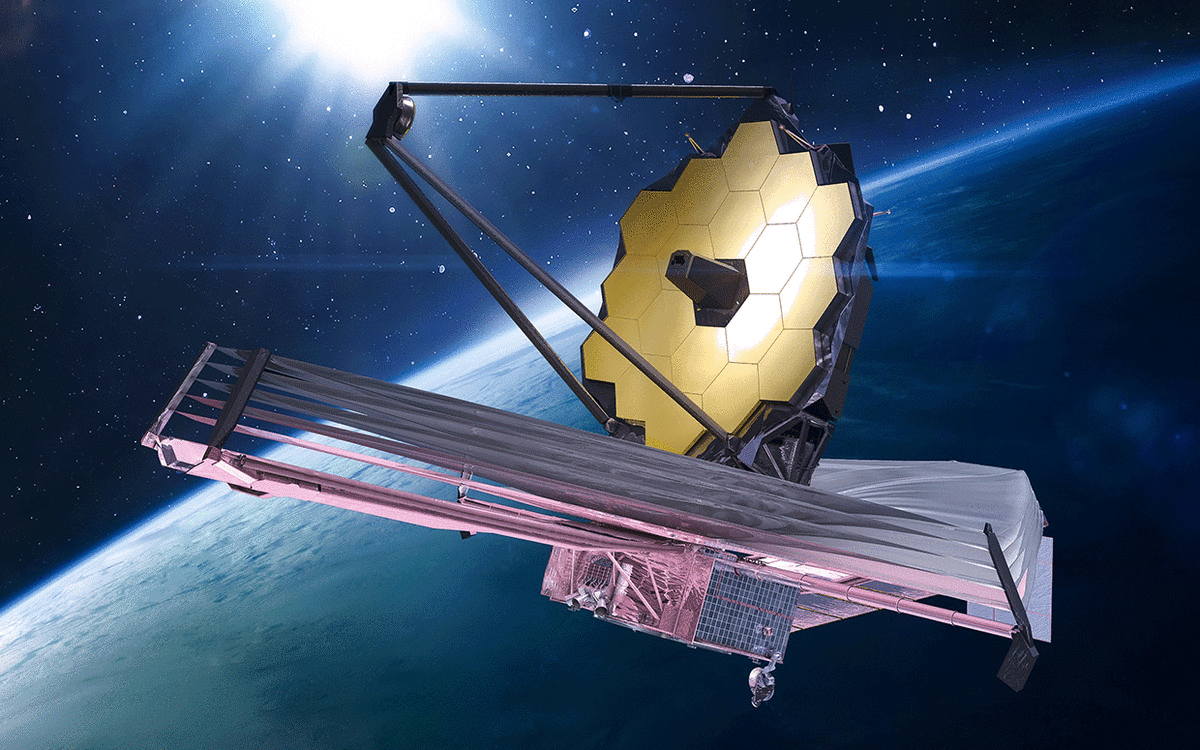
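To make the idea of an identity default plus stored differentials concrete, here is a minimal toy sketch. It is not Minary's implementation or API. The class name `SubjectiveSystem`, the scalar signals, the string context labels, and the `learning_rate` parameter are all assumptions made up for illustration. The point is only that the system never stores what it perceived, just how much each context disrupted it.

```python
class SubjectiveSystem:
    """Toy model (hypothetical): keeps per-context adjustments, not observations."""

    def __init__(self, learning_rate=0.5):
        # Assumed parameter: how strongly a felt disruption shifts future responses.
        self.learning_rate = learning_rate
        # One scalar differential per context label; starts empty.
        self.differentials = {}

    def respond(self, context, signal):
        # Default behavior is the identity: with no stored differential,
        # the signal passes through unchanged.
        return signal + self.differentials.get(context, 0.0)

    def adjust(self, context, disruption):
        # Remember only how the system was affected in this context,
        # and shift its future response to counteract that disruption.
        current = self.differentials.get(context, 0.0)
        self.differentials[context] = current - self.learning_rate * disruption


system = SubjectiveSystem(learning_rate=0.5)
system.adjust("windy", disruption=2.0)
calm_response = system.respond("calm", 1.0)    # unseen context: identity default
windy_response = system.respond("windy", 1.0)  # familiar context: adjusted
```

In this sketch an unfamiliar context falls back to the unadjusted identity response rather than failing, and memory grows with the number of contexts that mattered, not with the number of observations.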

Think about a space probe hurtling towards a distant planet. It may lose connection to ground control or might encounter unforeseen conditions. A subjectively trained probe would carry on and do its best, making adjustments as it gains more context. An objectively trained probe might behave unpredictably, entering a feedback loop or halting entirely.

It is more efficient to document contextual changes in internal system states than it is to document the outside world. Subjectivity is a local projection of the outside as interpreted through a system’s own internal semantics. The “schema” for objective reality is infinitely complex. The schema for internal reality is consistent. The latency of the universe is infinite. Local latency is not.

I submit that subjective computing is a new frontier in computer science, enabled by primitives like Minary: a shift from choreographing behavior to maintaining states of being.

Do we want subjective computers?

Why are we naming our AI agents? Why are we giving them voices? Why are we using them to work through our human experiences?

Or, more simply, why do we customize our wallpapers or choose this color case over that?

Humans are adapted for inter-subjective, relational exchange. Subjectivity would not only make computers more efficient and robust, it would also make them better tools for humans.

While a scientist or engineer may not want their spreadsheet to have subjectivity, it is clear that the majority of the population is trying to find different, subjective ways to work with computers. We are not accustomed to thinking about computers in these terms, but we clearly want to.