Visualizations to Accompany "The Minary Primitive of Computational Autopoiesis" Preprint

Yesterday my collaborator Dr. Colin Defant, Harvard mathematician and Benjamin Peirce Fellow, posted our paper...

“The Minary Primitive of Computational Autopoiesis”

https://arxiv.org/abs/2601.04501

I wrote the algorithm, while Dr. Defant provided the mathematical rigor by formalizing its stability and convergence and by confirming the very curious property that brought me down this rabbit hole in the first place!

This work dates all the way back to 2016, when it began its humble life as a consensus algorithm.

Here’s the abstract:

> We introduce Minary, a computational framework designed as a candidate for the first formally provable autopoietic primitive. Minary represents interacting probabilistic events as multi-dimensional vectors and combines them via linear superposition rather than multiplicative scalar operations, thereby preserving uncertainty and enabling constructive and destructive interference in the range [-1,1]. A fixed set of ‘perspectives’ evaluates ‘semantic dimensions’ according to hidden competencies, and their interactions drive two discrete-time stochastic processes. We model this system as an iterated random affine map and use the theory of iterated random functions to prove that it converges in distribution to a unique stationary law; we moreover obtain an explicit closed form for the limiting expectation in terms of row, column, and global averages of the competency matrix. We then derive exact formulas for the mean and variance of the normalized consensus conditioned on the activation of a given semantic dimension, revealing how consensus depends on competency structure rather than raw input signals. Finally, we argue that Minary is organizationally closed yet operationally open in the sense of Maturana and Varela, and we discuss implications for building self-maintaining, distributed, and parallelizable computational systems that house a uniquely subjective notion of identity.

------

I would like to provide additional color and commentary that did not make it into the paper. First and foremost, an image is worth a thousand words, so let’s take a look at some of the visualizations produced by the accompanying Python simulation.

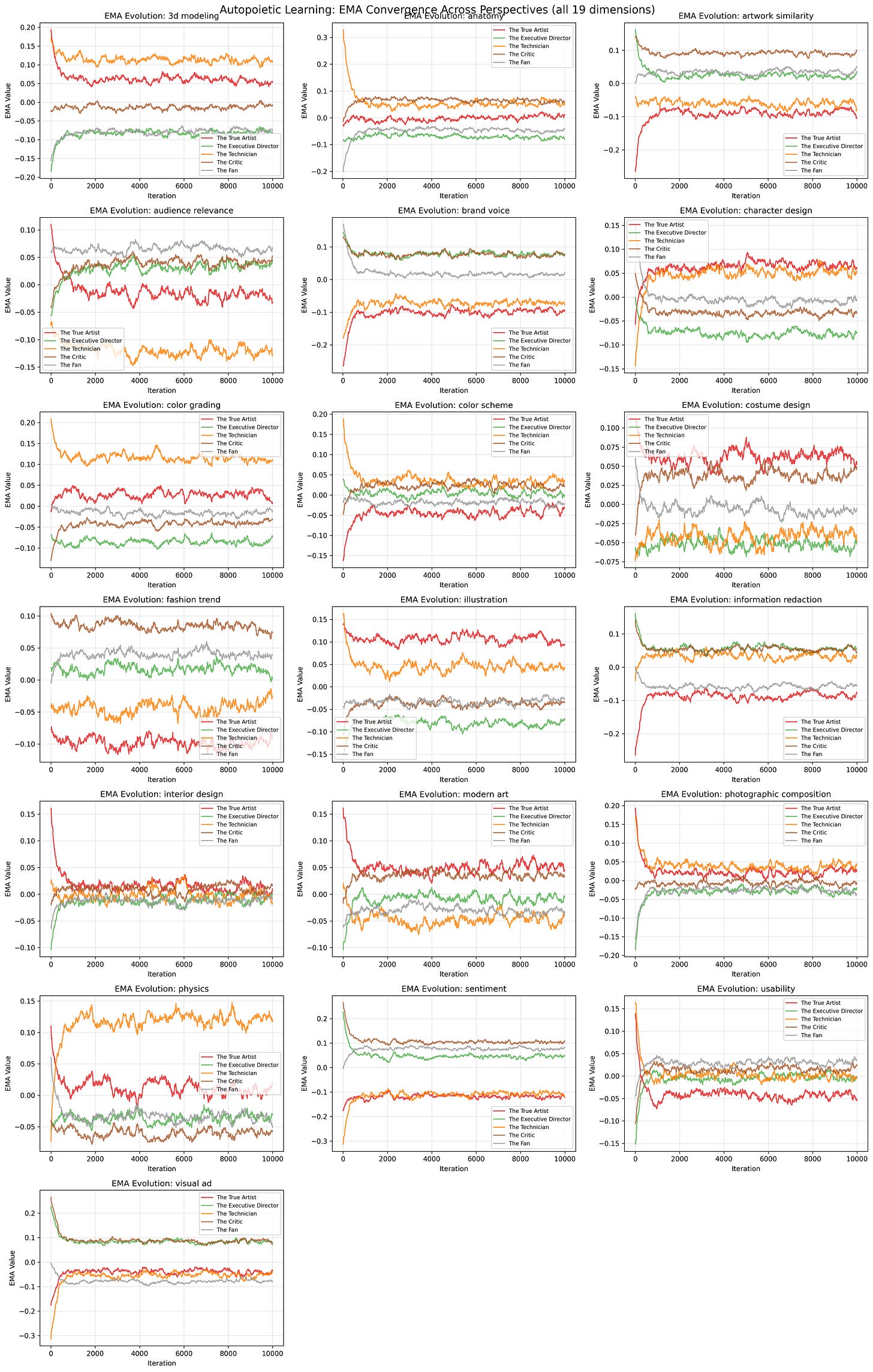

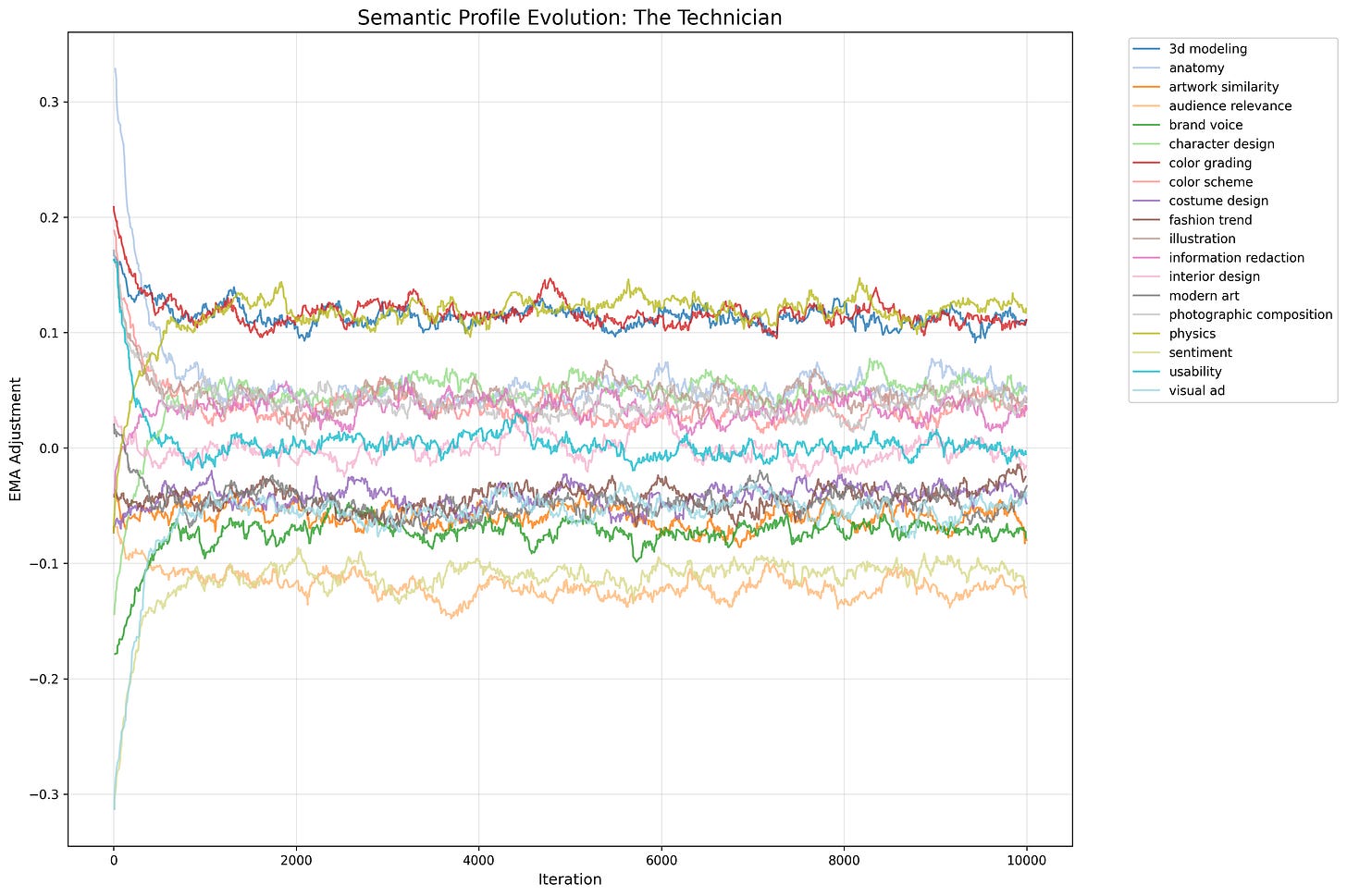

To quickly summarize the simulation: five perspectives, each designed around an “archetype,” share a combined matrix of 19 arbitrary and opaque “competency dimensions.” They interact iteratively. At each step, every perspective responds to a stimulus signal, with the competency dimensions coupled three at a time. The deviation of each adjusted response from the global mean is then added to a rolling exponential moving average for each (perspective, competency) pair.
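The loop summarized above can be sketched in a few lines of plain Python. This is a minimal illustration under stated assumptions, not the accompanying simulation itself: the function name `simulate`, the uniform stimulus, the default `alpha`, and especially the response model (stimulus scaled by the mean competency over the coupled dimensions) are placeholders I chose for readability.

```python
import random

def simulate(competency, iterations=10_000, alpha=0.01, couple=3, seed=0):
    """Toy sketch of the interaction loop; modeling details are assumptions."""
    rng = random.Random(seed)
    n_persp = len(competency)
    n_dims = len(competency[0])
    # delta[p][d]: exponential moving average for each (perspective, competency) pair
    delta = [[0.0] * n_dims for _ in range(n_persp)]
    for _ in range(iterations):
        stimulus = rng.uniform(0.0, 1.0)           # assumed stimulus signal
        dims = rng.sample(range(n_dims), couple)   # couple the dimensions three at a time
        # Assumed response model: stimulus scaled by the perspective's
        # mean competency over the coupled dimensions.
        responses = [
            stimulus * sum(competency[p][d] for d in dims) / couple
            for p in range(n_persp)
        ]
        global_mean = sum(responses) / n_persp
        for p in range(n_persp):
            deviation = responses[p] - global_mean  # deviation from the global mean
            for d in dims:
                delta[p][d] = (1 - alpha) * delta[p][d] + alpha * deviation
    return delta

# Hypothetical competency matrix: 5 perspectives x 19 competency dimensions.
matrix_rng = random.Random(1)
competency = [[matrix_rng.random() for _ in range(19)] for _ in range(5)]
delta = simulate(competency, iterations=2_000)
```

Each row of `delta` then holds one perspective's learned adjustments, which is the quantity the plots call Δ.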

Here each colored line tracks how a single perspective's learned adjustment (Δ) evolves over 10,000 iterations for a given competency dimension.

As you can see, each perspective naturally finds its competencies in the system and loosely converges around flexible, dynamic attractors. Crucially, the values the perspectives converge to are purely relative to one another, due to the zero-sum conservation properties of the Minary framework. I like to think of this relative-only property as subjectivity.
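A small check makes the zero-sum, relative-only behavior concrete. The sketch below assumes the update is an exponential moving average of each response's deviation from the group mean; `ema_update` and the sample responses are hypothetical names and values for illustration, not taken from the paper's code.

```python
def ema_update(delta, responses, alpha=0.01):
    """One EMA step on deviations from the group mean (assumed update rule)."""
    mean = sum(responses) / len(responses)
    return [(1 - alpha) * d + alpha * (r - mean) for d, r in zip(delta, responses)]

delta = [0.0] * 5  # five perspectives, a single competency dimension
for responses in ([0.9, 0.2, 0.5, 0.7, 0.1], [0.3, 0.8, 0.4, 0.6, 0.2]):
    delta = ema_update(delta, responses)
    # Deviations from a mean sum to zero, so the adjustments do too:
    # one perspective's gain is always another's loss.
    assert abs(sum(delta)) < 1e-12
```

Under this assumed rule, adding the same constant to every response leaves `delta` unchanged, because only differences from the group mean enter the update.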

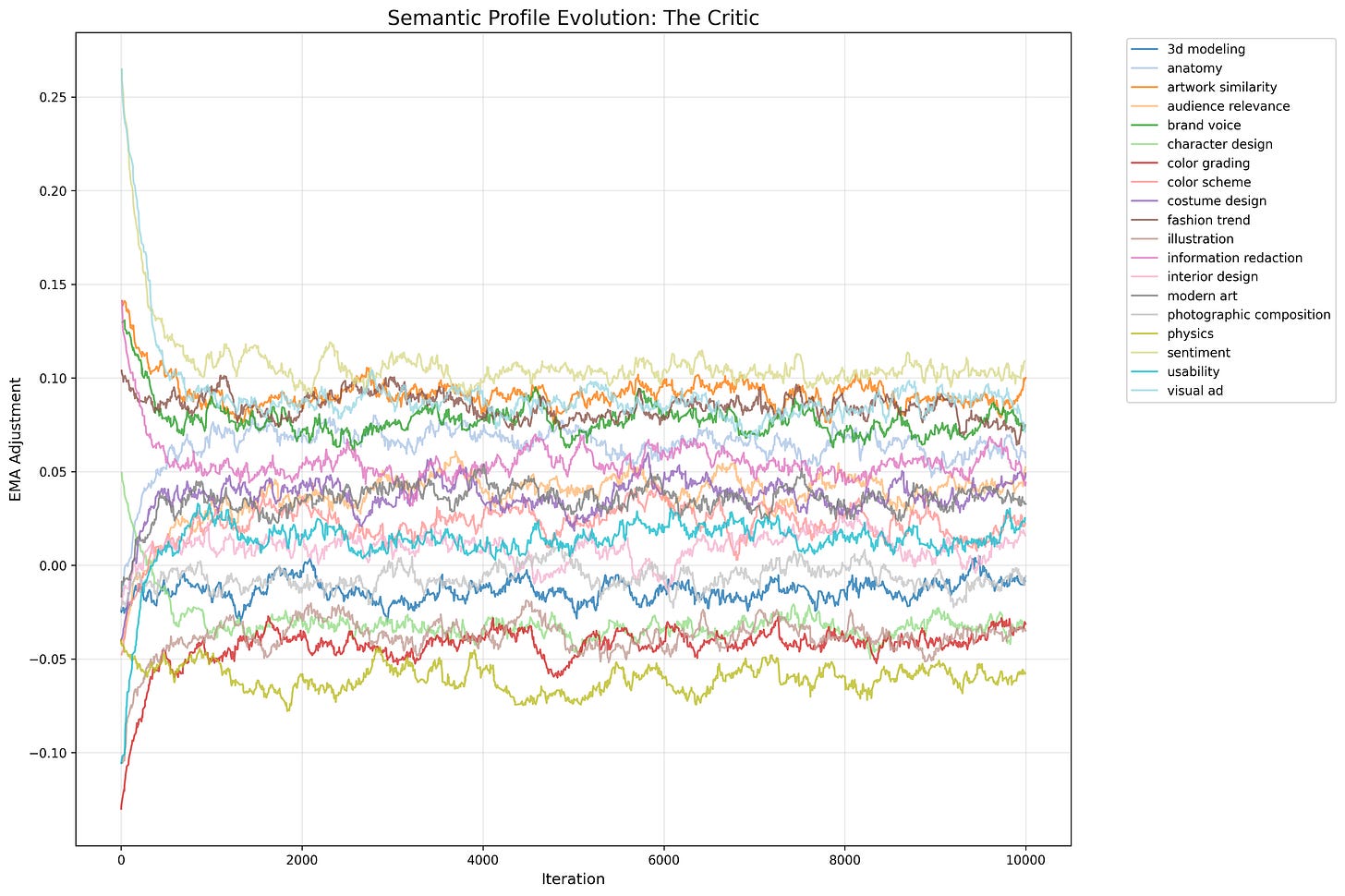

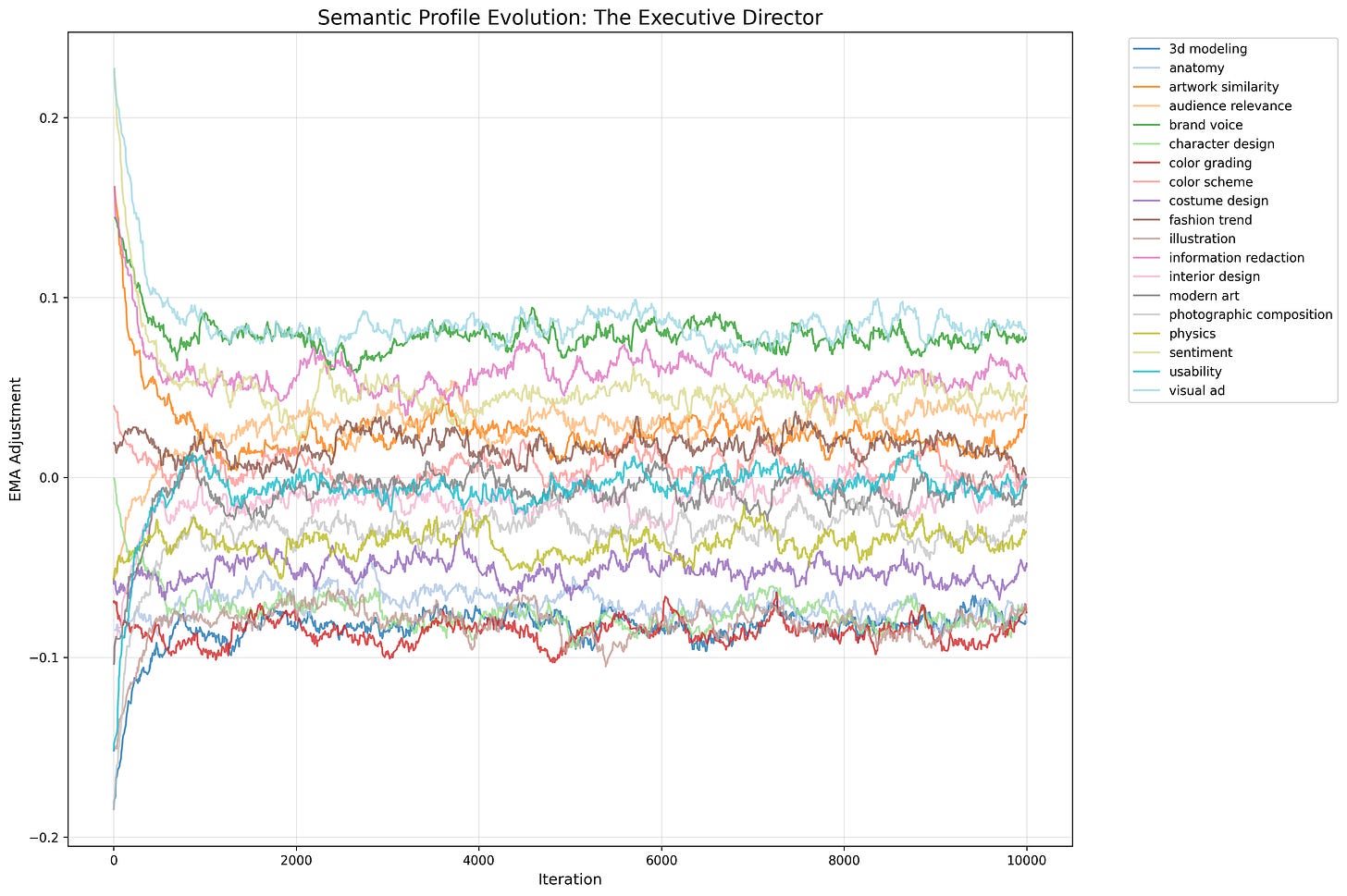

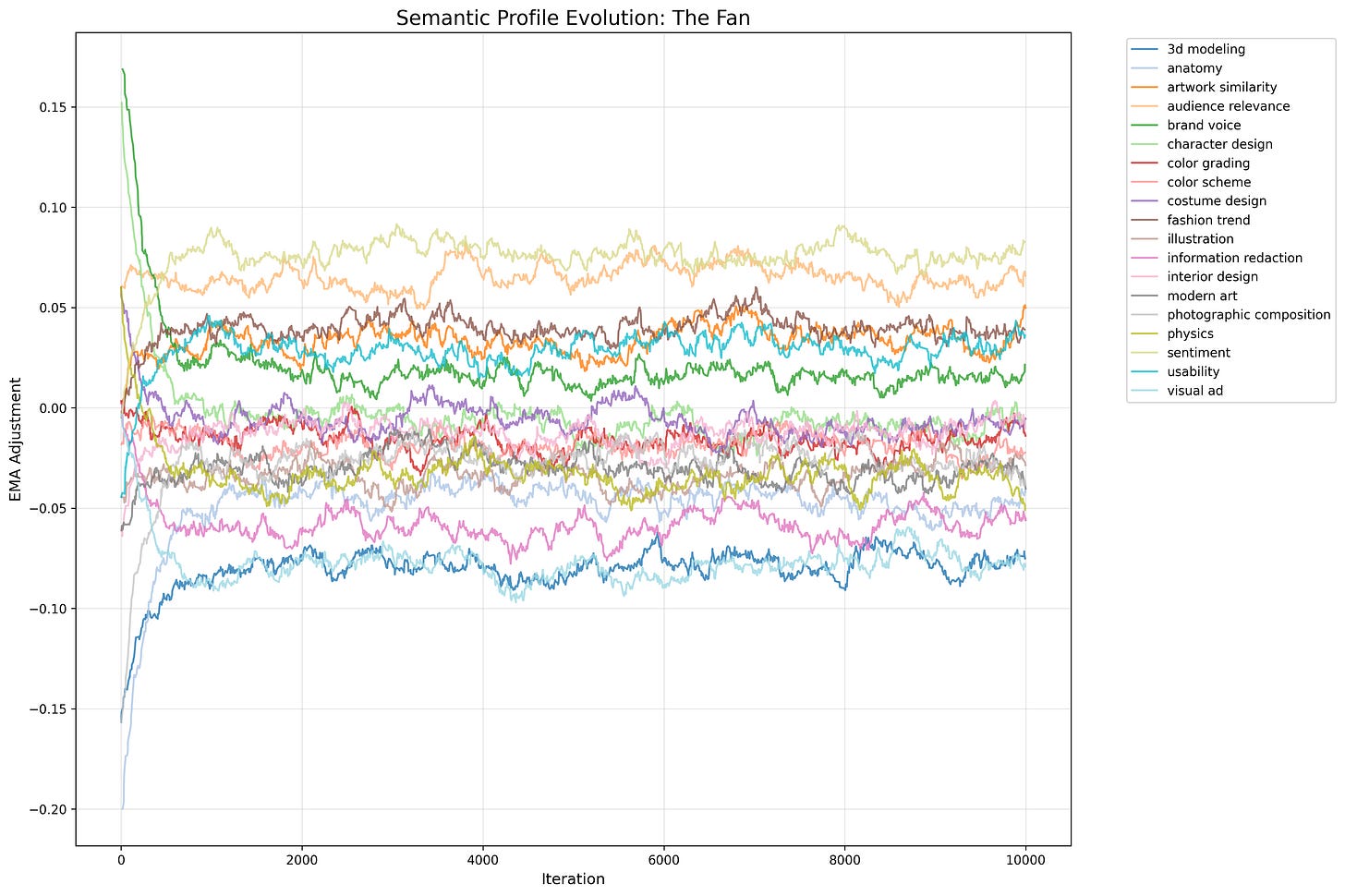

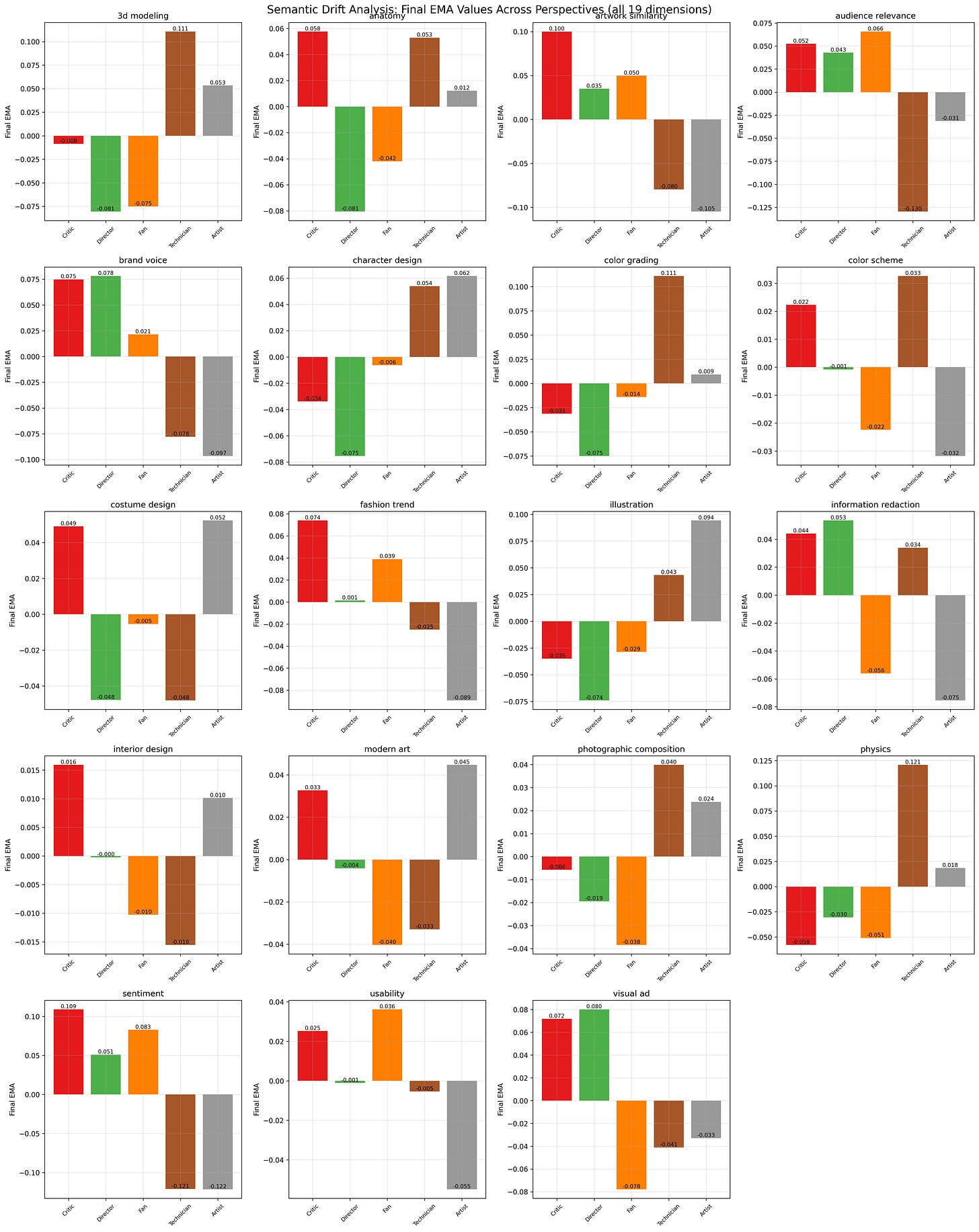

A closer look at the plot of each individual perspective provides greater insight into that perspective’s competency “DNA”. Each perspective retains its overall archetypal disposition while also adjusting for its relative identity within the context of the group. Isolating each perspective reveals its distinct "fingerprint", shaped largely by its inherent abilities plus some social information about how much its competencies influence the group dynamics.

A “semantic drift” bar chart is also helpful for discerning the distribution of collective competencies. It shows the final distribution of learned adjustments across all 19 competency dimensions and which perspectives emerged as relative experts in each one.

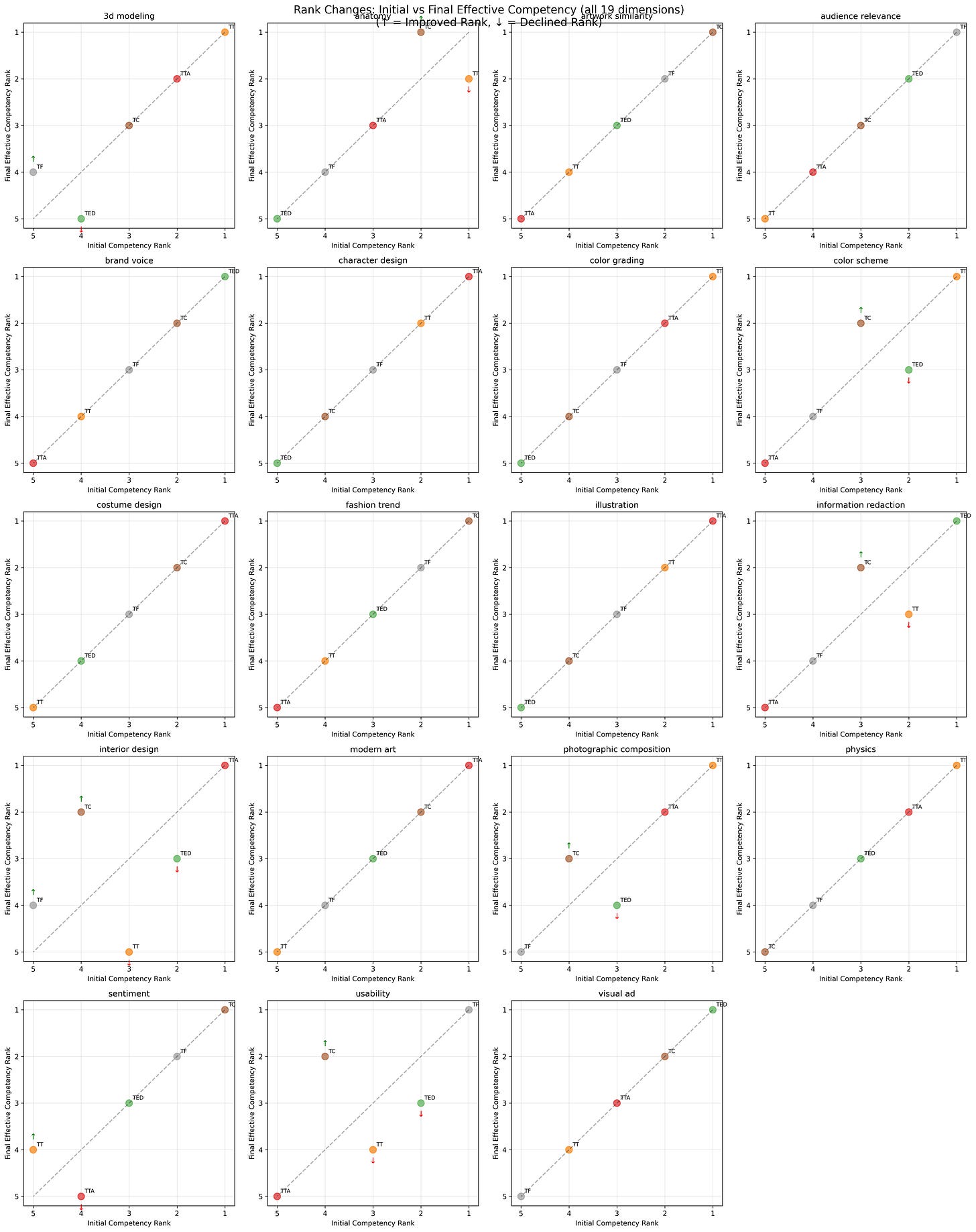

One of the more curious outcomes of such a system is the emergent swapping of relative expert rank, which deviates from the pre-designed competency matrix. The swapping of rank is caused purely by the dynamics of the dimensional coupling and the relative impact of each perspective's performance in the coupled context.

Here we compare the initial competency rankings (designed) with the final rankings (emergent). Diagonal lines indicate order preserved from the initial conditions, while crossings reveal where the system reorganized expertise through its own dynamics.
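The designed-versus-emergent comparison can be sketched for a single competency dimension as follows. The helper names `rank_order` and `rank_swaps` and both lists of numbers are hypothetical, chosen only to show how a crossing would be detected.

```python
def rank_order(values):
    """Indices sorted from highest to lowest value (top-ranked first)."""
    return sorted(range(len(values)), key=lambda i: values[i], reverse=True)

def rank_swaps(initial, final):
    """Pairs of perspectives whose relative order differs between the two lists."""
    swaps = []
    n = len(initial)
    for a in range(n):
        for b in range(a + 1, n):
            # A negative product means the pair's ordering flipped.
            if (initial[a] - initial[b]) * (final[a] - final[b]) < 0:
                swaps.append((a, b))
    return swaps

designed = [0.9, 0.7, 0.5, 0.3, 0.1]          # hypothetical designed competencies
emergent = [0.20, 0.25, 0.00, -0.15, -0.30]   # hypothetical learned adjustments

print(rank_order(designed))            # [0, 1, 2, 3, 4]
print(rank_order(emergent))            # [1, 0, 2, 3, 4]
print(rank_swaps(designed, emergent))  # [(0, 1)]
```

In this made-up example, perspectives 0 and 1 trade places, which is the kind of crossing the comparison is meant to surface.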

You can download the Python code and play with it yourself. Try more iterations, fewer iterations. Try adjusting the EMA alpha rate. Try changing the competency matrix.
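To get a feel for what the EMA alpha rate does before touching the full simulation, here is a standalone sketch. The noisy input signal and the function name `ema_trace` are assumptions for illustration. A smaller alpha averages over a longer history (roughly 1/alpha steps), so the trace is smoother but slower to settle.

```python
import random

def ema_trace(signal, alpha):
    """Exponential moving average of a sequence, starting from zero."""
    value, trace = 0.0, []
    for x in signal:
        value = (1 - alpha) * value + alpha * x
        trace.append(value)
    return trace

rng = random.Random(0)
# Hypothetical noisy deviations fluctuating around 0.5.
signal = [0.5 + rng.gauss(0, 0.2) for _ in range(10_000)]

for alpha in (0.001, 0.01, 0.1):
    tail = ema_trace(signal, alpha)[-2000:]
    spread = max(tail) - min(tail)
    print(f"alpha={alpha}: spread over last 2000 steps = {spread:.3f}")
```

Larger alpha values should show a visibly wider spread in the tail, which corresponds to the looser, more dynamic attractors in the plots.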